背景

ORCA 是PostgreSQL的下一代优化器,在QUERY的优化上比自带的优化器有长足的进步。

https://github.com/greenplum-db/gporca

安装ORCA

cmake

wget https://cmake.org/files/v3.5/cmake-3.5.2.tar.gz

tar -zxvf cmake-3.5.2.tar.gz

cd cmake-3.5.2

./configure --prefix=/home/digoal/cmake

make

make install

export PATH=/home/digoal/cmake/bin:$PATHGPOS

cd ~

git clone https://github.com/greenplum-db/gpos

cd gpos

mkdir build

cd build

cmake -D CMAKE_BUILD_TYPE=RELEASE -D CMAKE_INSTALL_PREFIX=/home/digoal/gpos_home ../

make installgp-xerces

cd ~

git clone https://github.com/greenplum-db/gp-xerces

cd gp-xerces

mkdir build

cd build

../configure --prefix=/home/digoal/gp-xerces_home

make -j 32

make installgporca

cd ~

git clone https://github.com/greenplum-db/gporca.git

cd gporca

mkdir build

cd build

cmake -D CMAKE_BUILD_TYPE=RELEASE \

-D CMAKE_INSTALL_PREFIX=/home/digoal/gporca_home \

-D GPOS_INCLUDE_DIR=/home/digoal/gpos_home/include \

-D GPOS_LIBRARY=/home/digoal/gpos_home/lib/libgpos.so \

-D XERCES_INCLUDE_DIR=/home/digoal/gp-xerces_home/include \

-D XERCES_LIBRARY=/home/digoal/gp-xerces_home/lib/libxerces-c.so ../

make -j 32

make installgreenplum

greenplum的源码安装与集群初始化参考

https://yq.aliyun.com/articles/180

源码安装部分修改为

$ git clone https://github.com/greenplum-db/gpdb.git

$ cd gpdb

cp -r ~/gporca_home/include/gpopt ./

$ ./configure --prefix=/home/digoal/gp --enable-orca --with-perl \

--with-python --with-libxml \

--with-includes=/home/digoal/gporca_home/include:/home/digoal/gpos_home/include:/home/digoal/gp-xerces_home/include \

--with-libraries=/home/digoal/gporca_home/lib:/home/digoal/gpos_home/lib:/home/digoal/gp-xerces_home/lib

$ make -j 32

$ make install

$ ln -s /home/digoal/gp /home/digoal/greenplum-db

$ vi ~/env_gp.sh

GPHOME=/home/digoal/greenplum-db

# Replace with symlink path if it is present and correct

if [ -h ${GPHOME}/../greenplum-db ]; then

GPHOME_BY_SYMLINK=`(cd ${GPHOME}/../greenplum-db/ && pwd -P)`

if [ x"${GPHOME_BY_SYMLINK}" = x"${GPHOME}" ]; then

GPHOME=`(cd ${GPHOME}/../greenplum-db/ && pwd -L)`/.

fi

unset GPHOME_BY_SYMLINK

fi

PATH=$GPHOME/bin:$GPHOME/ext/python/bin:$PATH

LD_LIBRARY_PATH=$GPHOME/lib:$GPHOME/ext/python/lib:$LD_LIBRARY_PATH

PYTHONPATH=$GPHOME/lib/python

#PYTHONHOME=$GPHOME/ext/python

OPENSSL_CONF=$GPHOME/etc/openssl.cnf

export GPHOME

export PATH

export LD_LIBRARY_PATH

export PYTHONPATH

#export PYTHONHOME

export OPENSSL_CONF

export MASTER_DATA_DIRECTORY=/data01/digoal/gpdata/gpseg-1

export PGHOST=127.0.0.1

export PGPORT=1921

export PGUSER=digoal

export PGDATABASE=postgres

export LD_LIBRARY_PATH=/home/digoal/gpos_home/lib:/home/digoal/gp-xerces_home/lib:/home/digoal/gporca_home/lib:$LD_LIBRARY_PATH

# vi /etc/ld.so.conf

include ld.so.conf.d/*.conf

/home/digoal/gpos_home/lib

/home/digoal/gp-xerces_home/lib

/home/digoal/gporca_home/lib

/home/digoal/greenplum-db/lib

# ldconfig你也可以把orca安装到与greenplum一致的目录中,那SO就不需要设置到/etc/ld.so.conf了。

测试ORCA

重启数据库

$ gpstop -M fast -a

$ gpstart -a开启ORCA测试

$ psql

set client_min_messages='log';

set optimizer=on;

set optimizer_enumerate_plans=on;

set optimizer_minidump=always;

set optimizer_enable_constant_expression_evaluation=off;

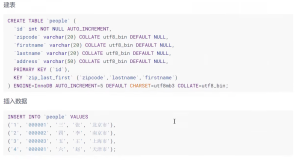

create table test(id int, info text, crt_time timestamp);

insert into test select generate_series(1,10000000), md5(random()::text),clock_timestamp();

\timing

insert into test select generate_series(1,10000000), md5(random()::text),clock_timestamp();

create index idx1 on test(info,crt_time);

create index idx2 on test(crt_time,info);

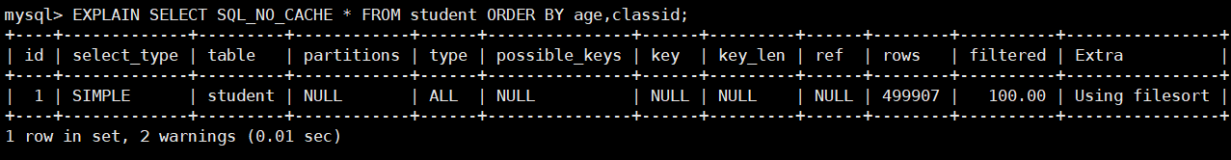

postgres=# explain analyze select * from test where info='abc' and crt_time between '2016-07-16 13:41:06.555882' and '2016-07-16 13:41:06.555882' order by crt_time limit 10;

LOG: statement: explain analyze select * from test where info='abc' and crt_time between '2016-07-16 13:41:06.555882' and '2016-07-16 13:41:06.555882' order by crt_time limit 10;

LOG: 2016-07-16 17:33:17:175315 CST,THD000,TRACE,"[OPT]: Number of plan alternatives: 8

",

QUERY PLAN

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Limit (cost=0.00..0.38 rows=1 width=45)

Rows out: 0 rows with 1.141 ms to end, start offset by 0.409 ms.

-> Gather Motion 16:1 (slice1; segments: 16) (cost=0.00..0.38 rows=1 width=45)

Merge Key: crt_time

Rows out: 0 rows at destination with 1.139 ms to end, start offset by 0.410 ms.

-> Sort (cost=0.00..0.38 rows=1 width=45)

Sort Key: crt_time

Rows out: 0 rows (seg0) with 0.065 ms to end, start offset by 1.099 ms.

Executor memory: 33K bytes avg, 33K bytes max (seg0).

Work_mem used: 33K bytes avg, 33K bytes max (seg0). Workfile: (0 spilling)

-> Index Scan using idx1 on test (cost=0.00..0.38 rows=1 width=45)

Index Cond: info = 'abc'::text

Filter: crt_time >= '2016-07-16 13:41:06.555882'::timestamp without time zone AND crt_time <= '2016-07-16 13:41:06.555882'::timestamp without time zone

Rows out: 0 rows (seg0) with 0.045 ms to end, start offset by 1.116 ms.

Slice statistics:

(slice0) Executor memory: 318K bytes.

(slice1) Executor memory: 209K bytes avg x 16 workers, 209K bytes max (seg0). Work_mem: 33K bytes max.

Statement statistics:

Memory used: 128000K bytes

Settings: optimizer=on

Optimizer status: PQO version 1.646

Total runtime: 5.807 ms

(22 rows)

postgres=# set enable_sort=off;

LOG: statement: set enable_sort=off;

SET

postgres=# explain analyze select * from test where info='abc' and crt_time between '2016-07-16 13:41:06.555882' and '2016-07-16 13:41:06.555882' order by crt_time limit 10;

LOG: statement: explain analyze select * from test where info='abc' and crt_time between '2016-07-16 13:41:06.555882' and '2016-07-16 13:41:06.555882' order by crt_time limit 10;

LOG: 2016-07-16 17:33:23:623812 CST,THD000,TRACE,"[OPT]: Number of plan alternatives: 8

",

QUERY PLAN

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Limit (cost=0.00..0.38 rows=1 width=45)

Rows out: 0 rows with 1.019 ms to end, start offset by 0.420 ms.

-> Gather Motion 16:1 (slice1; segments: 16) (cost=0.00..0.38 rows=1 width=45)

Merge Key: crt_time

Rows out: 0 rows at destination with 1.017 ms to end, start offset by 0.421 ms.

-> Sort (cost=0.00..0.38 rows=1 width=45)

Sort Key: crt_time

Rows out: 0 rows (seg0) with 0.081 ms to end, start offset by 1.047 ms.

Executor memory: 33K bytes avg, 33K bytes max (seg0).

Work_mem used: 33K bytes avg, 33K bytes max (seg0). Workfile: (0 spilling)

-> Index Scan using idx1 on test (cost=0.00..0.38 rows=1 width=45)

Index Cond: info = 'abc'::text

Filter: crt_time >= '2016-07-16 13:41:06.555882'::timestamp without time zone AND crt_time <= '2016-07-16 13:41:06.555882'::timestamp without time zone

Rows out: 0 rows (seg0) with 0.060 ms to end, start offset by 1.064 ms.

Slice statistics:

(slice0) Executor memory: 318K bytes.

(slice1) Executor memory: 209K bytes avg x 16 workers, 209K bytes max (seg0). Work_mem: 33K bytes max.

Statement statistics:

Memory used: 128000K bytes

Settings: enable_sort=off; optimizer=on

Optimizer status: PQO version 1.646

Total runtime: 7.194 ms

(22 rows)ORCA已经整合到阿里云推出的ApsaraDB Greenplum产品中,欢迎使用。

祝大家玩得开心,欢迎随时来阿里云 促膝长谈 业务需求 ,恭候光临。

阿里云的小伙伴们加油,努力做 最贴地气 的云数据库 。