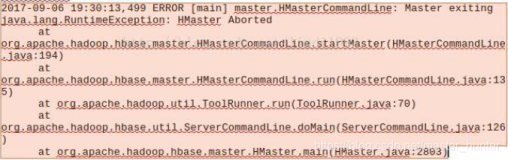

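Today I found that Hive on our Kerberos-enabled Hadoop test cluster had stopped working. Queries such as `select * ... limit`, which do not launch a MapReduce job, ran fine, but any query that did launch one failed with an odd error (Unable to retrieve URL for Hadoop Task logs. Unable to find job tracker info port.). I confirmed that the JobTracker was healthy and that its configuration was correct, so the problem did not seem to be related to the JobTracker. Digging into the TaskTracker log turned up the following error: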

```
2014-03-26 17:28:02,048 WARN org.apache.hadoop.mapred.TaskTracker: Exception while localization java.io.IOException: Job initialization failed (24) with output: File /home/test/platform must be owned by root, but is owned by 501
        at org.apache.hadoop.mapred.LinuxTaskController.initializeJob(LinuxTaskController.java:194)
        at org.apache.hadoop.mapred.TaskTracker$4.run(TaskTracker.java:1420)
        at java.security.AccessController.doPrivileged(Native Method)
        at javax.security.auth.Subject.doAs(Subject.java:396)
        at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1407)
        at org.apache.hadoop.mapred.TaskTracker.initializeJob(TaskTracker.java:1395)
        at org.apache.hadoop.mapred.TaskTracker.localizeJob(TaskTracker.java:1310)
        at org.apache.hadoop.mapred.TaskTracker.startNewTask(TaskTracker.java:2727)
        at org.apache.hadoop.mapred.TaskTracker$TaskLauncher.run(TaskTracker.java:2691)
Caused by: org.apache.hadoop.util.Shell$ExitCodeException:
        at org.apache.hadoop.util.Shell.runCommand(Shell.java:261)
        at org.apache.hadoop.util.Shell.run(Shell.java:188)
        at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:381)
        at org.apache.hadoop.mapred.LinuxTaskController.initializeJob(LinuxTaskController.java:187)
        ... 8 more
```

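Here /home/test/platform is the directory where the MapReduce installation lives. Changing the owner of /home/test/platform to root fixed the problem, but why does it need to be owned by root?

The stack trace shows that the failure occurred in the initializeJob method of the LinuxTaskController class (with Kerberos enabled, this is the TaskController implementation that has to be used). initializeJob performs the initial setup for a job: it takes the user, job ID, token, the mapred local dirs and other parameters, builds a command array from them, passes that array to the ShellCommandExecutor constructor, and finally calls the execute method of ShellCommandExecutor.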

```java
public void initializeJob(String user, String jobid, Path credentials,
                          Path jobConf, TaskUmbilicalProtocol taskTracker,
                          InetSocketAddress ttAddr
                          ) throws IOException {
  List<String> command = new ArrayList<String>(
      Arrays.asList(taskControllerExe,                         // task-controller
                    user,
                    localStorage.getDirsString(),              // mapred.local.dir
                    Integer.toString(Commands.INITIALIZE_JOB.getValue()),
                    jobid,
                    credentials.toUri().getPath().toString(),  // jobToken
                    jobConf.toUri().getPath().toString()));    // job.xml
  File jvm =                                  // use same jvm as parent
      new File(new File(System.getProperty("java.home"), "bin"), "java");
  command.add(jvm.toString());
  command.add("-classpath");
  command.add(System.getProperty("java.class.path"));
  command.add("-Dhadoop.log.dir=" + TaskLog.getBaseLogDir());
  command.add("-Dhadoop.root.logger=INFO,console");
  command.add(JobLocalizer.class.getName());  // main of JobLocalizer
  command.add(user);
  command.add(jobid);
  // add the task tracker's reporting address
  command.add(ttAddr.getHostName());
  command.add(Integer.toString(ttAddr.getPort()));
  String[] commandArray = command.toArray(new String[0]);
  ShellCommandExecutor shExec = new ShellCommandExecutor(commandArray);
  if (LOG.isDebugEnabled()) {
    LOG.debug("initializeJob: " + Arrays.toString(commandArray));  // commandArray
  }
  try {
    shExec.execute();
    if (LOG.isDebugEnabled()) {
      logOutput(shExec.getOutput());
    }
  } catch (ExitCodeException e) {
    int exitCode = shExec.getExitCode();
    logOutput(shExec.getOutput());
    throw new IOException("Job initialization failed (" + exitCode +
                          ") with output: " + shExec.getOutput(), e);
  }
}
```
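The "(24)" in the exception message is simply the exit code of the external process that initializeJob launched. As a rough illustration of that mechanism, and not Hadoop's actual implementation, here is a minimal C sketch that runs a command and returns its exit code. The function name `run_and_get_exit_code` is hypothetical.

```c
#define _POSIX_C_SOURCE 200809L
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Hypothetical helper (not part of Hadoop): run argv[0] with the given
 * arguments and return the child's exit code. Returns -1 if the child
 * could not be forked/waited for or was terminated by a signal. */
int run_and_get_exit_code(char *const argv[]) {
  pid_t pid = fork();
  if (pid < 0) {
    return -1;                    /* fork failed */
  }
  if (pid == 0) {
    execvp(argv[0], argv);        /* only returns if exec failed */
    _exit(127);                   /* conventional "could not execute" code */
  }
  int status = 0;
  if (waitpid(pid, &status, 0) < 0) {
    return -1;
  }
  return WIFEXITED(status) ? WEXITSTATUS(status) : -1;
}
```

Calling it with an argument vector such as `{"sh", "-c", "exit 24", NULL}` yields 24, which is the same kind of value the Java side reads back through `shExec.getExitCode()`.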

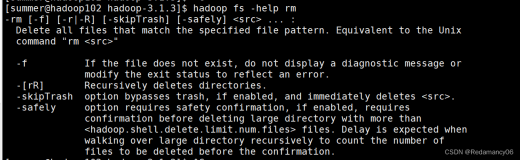

Enabling DEBUG logging on the TaskTracker reveals the actual contents of commandArray:

```
2014-03-26 19:49:02,489 DEBUG org.apache.hadoop.mapred.LinuxTaskController: initializeJob:
[/home/test/platform/hadoop-2.0.0-mr1-cdh4.2.0/bin/../sbin/Linux-amd64-64/task-controller,
hdfs, xxxxxxx, 0, job_201403261945_0002, xxxxx/jobToken, xxxx/job.xml, /usr/local/jdk1.6.0_37/jre/bin/java,
-classpath,xxxxxx.jar, -Dhadoop.log.dir=/home/test/logs/hadoop/mapred, -Dhadoop.root.logger=INFO,console,
org.apache.hadoop.mapred.JobLocalizer, hdfs, job_201403261945_0002, localhost.localdomain, 57536]
```

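The most important argument here is taskControllerExe, which is the path to the task-controller executable (in this case /home/test/platform/hadoop-2.0.0-mr1-cdh4.2.0/bin/../sbin/Linux-amd64-64/task-controller).

The execute method ultimately runs this task-controller binary.

The source code for task-controller is in the src/c++/task-controller directory.

The ownership check on the directory is defined in configuration.c: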

```c
static int is_only_root_writable(const char *file) {
  .......
  if (file_stat.st_uid != 0) {
    fprintf(LOGFILE, "File %s must be owned by root, but is owned by %d\n",
            file, file_stat.st_uid);
    return 0;
  }
  .......
```

If the file being checked is not owned by root, an error is reported.

The code that calls this function:

```c
int check_configuration_permissions(const char* file_name) {
  // copy the input so that we can modify it with dirname
  char* dir = strdup(file_name);
  char* buffer = dir;
  do {
    if (!is_only_root_writable(dir)) {
      free(buffer);
      return -1;
    }
    dir = dirname(dir);
  } while (strcmp(dir, "/") != 0);
  free(buffer);
  return 0;
}
```

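In other words, check_configuration_permissions calls is_only_root_writable repeatedly, walking upward from the binary's directory through each parent directory and checking the owner at every level. If any directory along the way is not owned by root, the check fails. This means every directory in the chain must be owned by root.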
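When this check fails, the log only names the first offending directory, so it can help to list the owner of every component at once. The following is a minimal diagnostic sketch that mirrors the loop above; it is not part of task-controller, and the names `walk_up` and `print_if_not_root_owned` are hypothetical. Unlike the original loop, it also stops at "." so that a relative path cannot loop forever.

```c
#define _POSIX_C_SOURCE 200809L
#include <libgen.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>
#include <sys/stat.h>

/* Hypothetical callback type: called once per path component. */
typedef void (*dir_visitor)(const char *dir, void *ctx);

/* Visit `path`, then each parent, stopping before "/" (or "." for a
 * relative path). Returns the number of components visited, -1 on error. */
int walk_up(const char *path, dir_visitor visit, void *ctx) {
  char *buffer = strdup(path);    /* dirname() may modify its argument */
  if (buffer == NULL) {
    return -1;
  }
  char *dir = buffer;
  int visited = 0;
  do {
    visit(dir, ctx);
    visited++;
    dir = dirname(dir);
  } while (strcmp(dir, "/") != 0 && strcmp(dir, ".") != 0);
  free(buffer);
  return visited;
}

/* Visitor that prints every component not owned by uid 0.
 * `ctx` is expected to be a FILE* to write to. */
void print_if_not_root_owned(const char *dir, void *ctx) {
  FILE *out = (FILE *) ctx;
  struct stat st;
  if (stat(dir, &st) != 0) {
    fprintf(out, "%s: cannot stat\n", dir);
    return;
  }
  if (st.st_uid != 0) {
    fprintf(out, "%s is owned by uid %d, not root\n", dir, (int) st.st_uid);
  }
}
```

For example, `walk_up("/home/test/platform", print_if_not_root_owned, stderr)` visits /home/test/platform, /home/test and /home, printing a line for each one that is not owned by root.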

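This is a security measure for the task-controller. The code contains quite a few checks on the permissions of this executable, and if any one of them is not satisfied, the TaskTracker will not run properly.

Cloudera's official documentation describes the permissions required for this file as follows: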

```
The Task-controller program is used to allow the TaskTracker to run tasks under the Unix account of the user who submitted the job in the first place.
It is a setuid binary that must have a very specific set of permissions and ownership in order to function correctly. In particular, it must:
1) Be owned by root
2) Be owned by a group that contains only the user running the MapReduce daemons
3) Be setuid
4) Be group readable and executable
```
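Three of these four requirements can be read directly from the owner and mode bits of the file. As a rough sketch, and not the check that task-controller itself performs, the function below reports which of them are violated. The enum and the name `task_controller_mode_problems` are hypothetical. Requirement 2 (the group must contain only the MapReduce daemon user) depends on the group database and is not covered here.

```c
#include <sys/types.h>
#include <sys/stat.h>

/* Hypothetical problem flags, combined as a bitmask. */
enum {
  TC_OK = 0,
  TC_NOT_ROOT_OWNED = 1 << 0,          /* requirement 1 violated */
  TC_NOT_SETUID = 1 << 1,              /* requirement 3 violated */
  TC_GROUP_CANNOT_READ_EXEC = 1 << 2   /* requirement 4 violated */
};

/* Check owner uid and mode bits (e.g. st_uid and st_mode from stat())
 * against the requirements quoted above. Returns TC_OK or a bitmask. */
int task_controller_mode_problems(uid_t owner, mode_t mode) {
  int problems = TC_OK;
  if (owner != 0) {
    problems |= TC_NOT_ROOT_OWNED;
  }
  if ((mode & S_ISUID) == 0) {
    problems |= TC_NOT_SETUID;
  }
  if ((mode & (S_IRGRP | S_IXGRP)) != (S_IRGRP | S_IXGRP)) {
    problems |= TC_GROUP_CANNOT_READ_EXEC;
  }
  return problems;
}
```

In practice one would call `stat()` on the task-controller path and pass `st.st_uid` and `st.st_mode` to this function. A return value of `TC_OK` only means these three checks passed.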

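That is not the end of the story. The task-controller has a configuration file, taskcontroller.cfg, and the way its location is determined is rather confusing.

Some documents say that setting HADOOP_SECURITY_CONF_DIR is enough, but in CDH 4.2.0 this environment variable has no effect. This can be fixed by applying a patch:

https://issues.apache.org/jira/browse/MAPREDUCE-4397

By default, the directory is determined as follows: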

```c
#ifndef HADOOP_CONF_DIR  // if HADOOP_CONF_DIR is not defined at compile time, infer_conf_dir is called
  conf_dir = infer_conf_dir(argv[0]);
  if (conf_dir == NULL) {
    fprintf(LOGFILE, "Couldn't infer HADOOP_CONF_DIR. Please set in environment\n");
    return INVALID_CONFIG_FILE;
  }
#else
  conf_dir = strdup(STRINGIFY(HADOOP_CONF_DIR));
#endif
```

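The infer_conf_dir function is shown below. It derives the configuration directory from a path relative to the location of the binary. For example, in our environment the executable is at /home/test/platform/hadoop-2.0.0-mr1-cdh4.2.0/bin/../sbin/Linux-amd64-64/task-controller, and the configuration file is at

/home/test/platform/hadoop-2.0.0-mr1-cdh4.2.0/conf/taskcontroller.cfg: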

```c
char *infer_conf_dir(char *executable_file) {
  char *result;
  char *exec_dup = strdup(executable_file);
  char *dir = dirname(exec_dup);
  int relative_len = strlen(dir) + 1 + strlen(CONF_DIR_RELATIVE_TO_EXEC) + 1;
  char *relative_unresolved = malloc(relative_len);
  snprintf(relative_unresolved, relative_len, "%s/%s",
           dir, CONF_DIR_RELATIVE_TO_EXEC);
  result = realpath(relative_unresolved, NULL);
  // realpath will return NULL if the directory doesn't exist
  .......
```
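To make the path arithmetic concrete, here is a small sketch of the string-joining step only, without the realpath call, so it also works when the directory does not exist. The function name `unresolved_conf_dir` is hypothetical, and the value "../../conf" for the relative path is an assumption inferred from the example paths in this post, not taken from the quoted source.

```c
#define _POSIX_C_SOURCE 200809L
#include <libgen.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

/* Assumption: inferred from the executable and config locations above. */
#define SKETCH_CONF_DIR_RELATIVE_TO_EXEC "../../conf"

/* Hypothetical helper: return a malloc'd "<dirname(exe)>/<relative>" string
 * without resolving ".." components. The caller must free the result. */
char *unresolved_conf_dir(const char *executable_file, const char *relative) {
  char *exec_dup = strdup(executable_file);  /* dirname() may modify its input */
  if (exec_dup == NULL) {
    return NULL;
  }
  char *dir = dirname(exec_dup);
  size_t len = strlen(dir) + 1 + strlen(relative) + 1;
  char *result = malloc(len);
  if (result != NULL) {
    snprintf(result, len, "%s/%s", dir, relative);
  }
  free(exec_dup);                            /* `dir` is no longer used here */
  return result;
}
```

For the executable path above this produces /home/test/platform/hadoop-2.0.0-mr1-cdh4.2.0/bin/../sbin/Linux-amd64-64/../../conf. Passing that string to `realpath` on a machine where the directory exists would collapse the `..` components into /home/test/platform/hadoop-2.0.0-mr1-cdh4.2.0/conf, which matches the location of taskcontroller.cfg.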

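The implementation of the TaskController-related classes will be covered in a later post.

This article is reposted from 菜菜光's 51CTO blog. Original link: http://blog.51cto.com/caiguangguang/1385587. To republish it, please contact the original author.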